Projects

Peter's parking project

January 2014 - ongoing

petersparkingproject.com

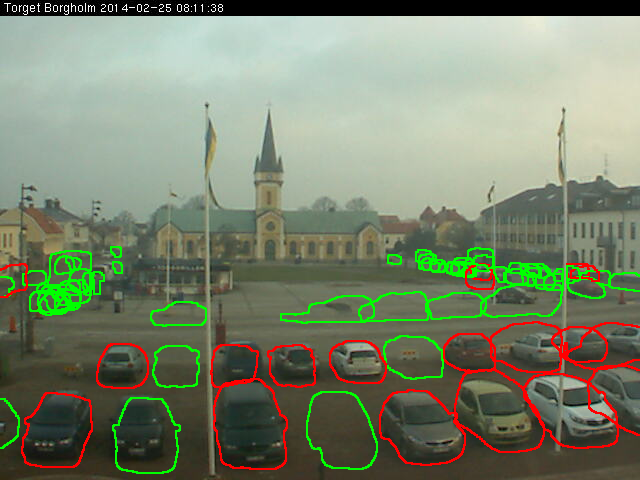

A smart city project using computer vision and networked cameras to find free spots and eliminate parking pain forever.

After several months of iterations I constructed a successful prototype using a variety of computer vision techniques and applied it to the city of Borgholm, Sweden. The prototype does not perform well right now because environmental conditions (parking spot locations, seasons) have changed since it was built and optimised. See above for images of when it was newly built and kicking ass.

The next version includes 7 webcams from across the world, and a more advanced ML approach which will improve accuracy and robustness substantially.

TrackerKeeper

May 2014 - ongoing

trackerkeeper.co

A website that aims to be a single place for keeping track of the multimedia links one consumes day to day, sharing them with others, and organising them into playlists.

I currently have a live website that I prototyped in about four hours using Google Forms and some hacky jQuery, which was a fun and very rewarding experience. It also inspired Chris Miceli to build the terrifying and aptly named SheetyFS, a file system built out of Google Spreadsheets.

The next step for the tool is replacing Google Forms with a backend that can fetch metadata from YouTube and SoundCloud, which should improve the user experience a lot. That will also mean a real auth system, along with simple playlist management and some performance tuning.

Basil Framework

August 2013 - January 2014

basilframework.com

Basil is a backend as a service that aims to make web development better by providing typical web application requirements such as login, file uploading, and permissions, as interfaced components that can link to both the backend and frontend of your application.

I was selected to pitch Basil as a finalist at the USYD Genesis business plan competition in 2013 using this slide deck.

I spent a lot of time learning about CORS, Thrift, and ORMs, designing the platform, and prototyping a first version in Python, but have not had time to get to version 1 (preferring other projects). This is a big one.

There is an absurd amount of replicated code in the world. A quick examination of any popular set of applications or websites reveals that they all offer very similar functionality (login, logout, linking social accounts, uploading and viewing images and files, permissions systems, search functions, and so on), and yet the code is written from scratch each time.

Proof of the problem is in the popularity of the various services and tools that attempt to provide such usefulness out of the box. Rails is probably the best attempt at addressing the problem, but the quality of gems in functionality and security is not always good. On the SaaS side there are Parse, AppBuddy, Firebase and many others, targeting mobile apps in particular, where the narrow requirements found across apps make the issue of code duplication even more painfully obvious. These companies have done well (I believe they've all been sold to various BigCorps now), but they lack appeal for building business applications. They don't guarantee they won't shut down one day (like Firebase, Parse soon), may own your data, and are not easy to extend with functionality in your own backend.

Basil aims to combine the strengths of these two approaches and mitigate their weaknesses. It is a free software package that needs minimal configuration and is available to your application as a Thrift service. When you are ready to scale, you can export the service to the cloud with one click.

Harvest

Early 2012

Collaborated with James Somers to design Harvest, a machine learning platform where you could upload your feature vectors and have a classification algorithm applied to them much faster than with a desktop application such as Weka. The idea got as far as us implementing a fast threaded random forest in C++, and spending a lot of time reading about all the hot-shot companies around the world that were popping up doing the exact same thing, like SkyTree, Microsoft Azure and BigML.

During my honors thesis I undertook to apply time series classification techniques to astronomical data. This involved running long machine learning experiments to explore the classification problem and verify my results. Although I could do other work while waiting for programs to finish, the turnaround time on the experiments was long enough to disrupt my thinking and my attempts to solve the problem.

I was using Weka, a machine learning toolkit written in Java and produced by the University of Waikato. All credit to the Waikato guys, but Weka's algorithms are mostly for learning and lightweight experimentation, and they do not scale well. Frustrated with the slowness of my tools, I wondered about the potential of a cloud-based service to make machine learning research faster. Machine learning classification algorithms have various strengths and weaknesses, but the structure of the input for most popular algorithms is almost the same.

- Input consists of a vector of features, each either nominal (e.g. 'A', 'B', 'C') or real-valued. Input is separated into training, testing and unseen data (the last used when applying the algorithm)

- Output consists of a class label applied to each vector

So regardless of which algorithm you intend to use, you can simply upload your input, run it through the platform, and download the output or a performance report.
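A minimal sketch of that shared shape, in Python. This is not Harvest's code: the names (`Classifier`, `run_experiment`, `nearest_neighbour`) and the toy data are hypothetical, and a 1-nearest-neighbour classifier stands in for the random forest purely to keep the example short. The point is only that any algorithm taking training vectors, training labels and unseen vectors fits the same interface.

```python
from typing import Callable, Dict, List, Sequence, Tuple, Union

Feature = Union[float, str]   # real-valued or nominal
Vector = Sequence[Feature]
# Any algorithm with this signature can be plugged in (hypothetical interface).
Classifier = Callable[[List[Vector], List[str], List[Vector]], List[str]]


def distance(a: Vector, b: Vector) -> float:
    """Squared difference for real features, 0/1 mismatch for nominal ones."""
    total = 0.0
    for x, y in zip(a, b):
        if isinstance(x, str) or isinstance(y, str):
            total += 0.0 if x == y else 1.0
        else:
            total += (x - y) ** 2
    return total


def nearest_neighbour(train_x: List[Vector], train_y: List[str],
                      unseen_x: List[Vector]) -> List[str]:
    """Stand-in classifier: label each vector like its closest training vector."""
    labels = []
    for v in unseen_x:
        best = min(range(len(train_x)), key=lambda i: distance(train_x[i], v))
        labels.append(train_y[best])
    return labels


def run_experiment(classifier: Classifier,
                   train_x: List[Vector], train_y: List[str],
                   test_x: List[Vector], test_y: List[str],
                   unseen_x: List[Vector]) -> Tuple[List[str], Dict[str, float]]:
    """Return labels for the unseen data plus a small performance report."""
    predicted = classifier(train_x, train_y, test_x)
    correct = sum(p == a for p, a in zip(predicted, test_y))
    report = {"test_accuracy": correct / len(test_y)}
    return classifier(train_x, train_y, unseen_x), report


# Toy data: one real-valued and one nominal feature per vector.
train_x = [(1.0, "A"), (1.2, "A"), (5.0, "B"), (5.5, "C")]
train_y = ["star", "star", "galaxy", "galaxy"]
test_x = [(0.9, "A"), (5.2, "B")]
test_y = ["star", "galaxy"]
unseen_x = [(1.1, "A"), (6.0, "C")]

labels, report = run_experiment(nearest_neighbour, train_x, train_y,
                                test_x, test_y, unseen_x)
```

Swapping in a different algorithm means passing a different function as `classifier`; the upload, run and report steps stay the same.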

Men's Style Forum Analysis

September 2013 - ongoing

bitbucket.org/urbanophile/sfscraper

Collaborated with Matt Gibson to scrape the complete post history of men's style forum. Data cleaning is done; analysis and insight extraction are still to come. Here are a few ideas we want to pursue:

- Extracting fashion 'facts' using off the shelf fact extraction e.g. 'pants and belt must match'

- Doing basic analysis like watching certain categories of clothing change in popularity across seasons, mentions of color, or dominant colors in uploaded images

- Developing an impression of the favourite stores on the website, and brief summaries of their most liked products (e.g. Loake brand brogue shoes)

4chan Analysis

2012, someday

bitbucket.org/peterashwell/4chan-analysis/src

Scraped several months of the popular 4chan board /b/ with the objective of filtering out the typical low-quality content and extracting creative and interesting posts. It culminated in me having 3 months of images and posts on disk, and unfortunately I never found time to try to rank and filter them. I'm still interested in long-term archival of internet communities and will probably revisit this at some point.

2011 Google AI Challenge - Ants

ants.aichallenge.org/profile.php?user=12556 - my bot, ‘antsbot’, in action

Collaborated with James Somers to build a successful AI bot in Python for the 2011 Google AI Challenge, a simple RTS game played against other people's programs from around the world. The bot was based on a simple diffusion field algorithm with some performance tweaks. It performed well, placing 308 out of 7897 entrants.
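For anyone curious what a diffusion field looks like, here is a rough sketch of the general idea, not the bot's actual code. Goals such as food emit a fixed "scent", every other cell repeatedly takes a decayed sum of its four neighbours, and an ant steps to the neighbouring cell with the strongest value. The grid size, `decay`, `steps`, the wrap-around edges and all function names are assumptions made for the illustration.

```python
from typing import Dict, List, Set, Tuple

Cell = Tuple[int, int]


def neighbours(cell: Cell, rows: int, cols: int) -> List[Cell]:
    """Four-connected neighbours; the map is assumed to wrap at the edges."""
    r, c = cell
    return [((r - 1) % rows, c), ((r + 1) % rows, c),
            (r, (c - 1) % cols), (r, (c + 1) % cols)]


def diffuse(rows: int, cols: int, sources: Dict[Cell, float],
            walls: Set[Cell], steps: int = 20,
            decay: float = 0.2) -> List[List[float]]:
    """Spread the source values across the grid for a fixed number of steps."""
    field = [[0.0] * cols for _ in range(rows)]
    for _ in range(steps):
        nxt = [[0.0] * cols for _ in range(rows)]
        for r in range(rows):
            for c in range(cols):
                cell = (r, c)
                if cell in walls:
                    continue                    # walls block the scent, stay 0
                if cell in sources:
                    nxt[r][c] = sources[cell]   # sources hold a fixed value
                else:
                    nxt[r][c] = decay * sum(
                        field[nr][nc]
                        for nr, nc in neighbours(cell, rows, cols))
        field = nxt
    return field


def best_move(field: List[List[float]], ant: Cell, walls: Set[Cell]) -> Cell:
    """Step to the passable neighbour with the strongest scent."""
    rows, cols = len(field), len(field[0])
    options = [n for n in neighbours(ant, rows, cols) if n not in walls]
    return max(options, key=lambda n: field[n[0]][n[1]])


# Hypothetical 7x7 map: one food source, one wall, one ant.
walls = {(2, 4)}
field = diffuse(7, 7, sources={(3, 5): 100.0}, walls=walls)
move = best_move(field, ant=(3, 3), walls=walls)
```

The appeal of the approach is that one pass over the grid serves every ant: each ant only reads the values next to it, so no per-ant pathfinding is needed.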

Amusingly, whoever built the battle system still has it running, so my bot and all the others fight there every day like it's some kind of digital Valhalla.